Findings

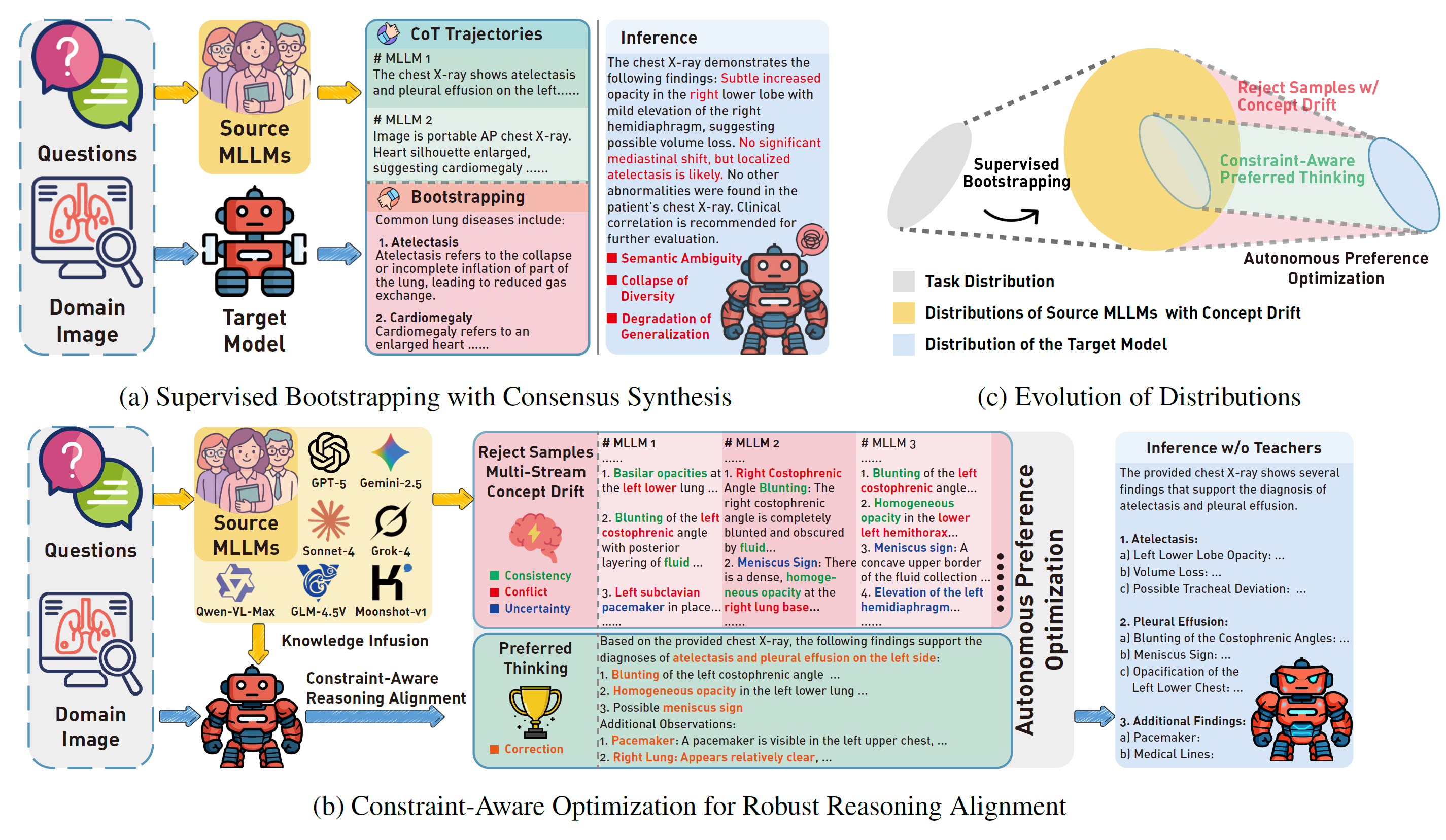

During our initial attempts to align target models with the collective reasoning of multiple state-of-the-art MLLMs, we observed a counter-intuitive phenomenon: simply aggregating their chain-of-thought (CoT) trajectories often degraded the target model's robustness, leading to severe hallucinations and semantic inconsistencies. By systematically tracing the generation process, we found that these errors stemmed not from the inadequacy of any individual source but from fundamentally conflicting reasoning paradigms across models. This observation suggests that multi-source alignment is not a static knowledge transfer but a dynamic, non-stationary problem characterized by inter-model concept drift.

Conceptual illustration of multi-stream drift.

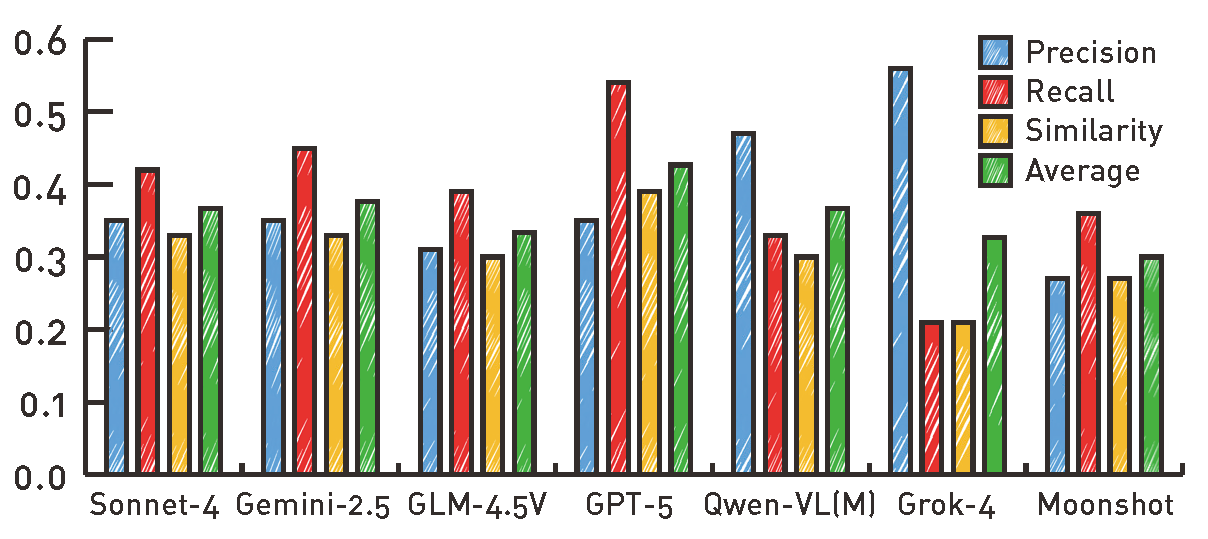

Quantitative measurement of concept drift among source MLLMs.
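This section does not specify the metric behind the drift measurement. As one possible way to quantify how far source models diverge, the sketch below assumes each source's behavior on a shared prompt can be summarized as a probability distribution over the same K discrete outcomes (e.g., answer options or binned response lengths) and computes the pairwise Jensen-Shannon divergence. The model names and probabilities are hypothetical placeholders, not measurements of Qwen-VL-Max, GPT-4o, or any other real model.

```python
import math
from itertools import combinations


def kl(p, q):
    """KL divergence KL(p || q) in bits; terms with p_i == 0 contribute 0."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js(p, q):
    """Jensen-Shannon divergence in bits: symmetric and bounded in [0, 1]."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]  # midpoint distribution
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def pairwise_drift(sources):
    """Return {(name_a, name_b): JSD} for every unordered pair of sources."""
    return {
        (a, b): js(sources[a], sources[b])
        for a, b in combinations(sorted(sources), 2)
    }


# Hypothetical source distributions over K = 4 shared outcomes.
sources = {
    "model_A": [0.7, 0.2, 0.1, 0.0],
    "model_B": [0.2, 0.3, 0.3, 0.2],
    "model_C": [0.6, 0.3, 0.1, 0.0],
}

drift = pairwise_drift(sources)
for pair, value in drift.items():
    print(pair, round(value, 3))
```

Jensen-Shannon divergence is used here because it stays finite when one source assigns zero probability to an outcome that another source favors; tracking these pairwise values over time is one hedged way to make "inter-model drift" measurable.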

Distilled Student

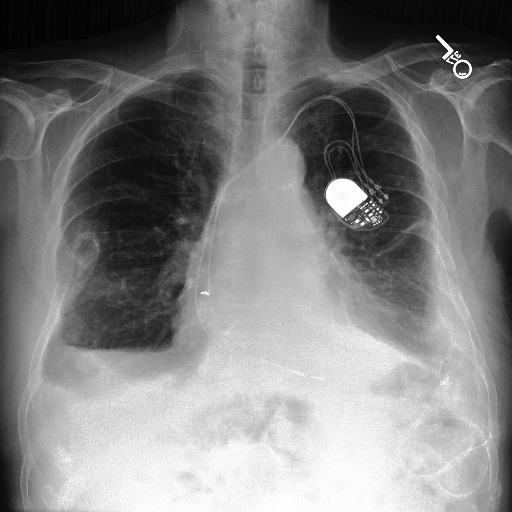

Based on the provided PA chest X-ray, the following key findings support the diagnoses of atelectasis and pleural effusion:

1. Atelectasis:
   - Volume Loss in the Left Lower Lung Field: The left lower lung appears significantly darker than the right, indicating volume loss.

     *Inconsistency: atelectasis is denser (whiter), not darker.*

   - ...

2. Pleural Effusion:
   - Bilateral Pleural Opacities: Both lungs show areas of increased opacity, but they are more pronounced on the left.

     *Overgeneralization: bilateral opacities have multiple possible causes and cannot on their own be taken as evidence of pleural effusion.*

   - Possible Pleural Line: There may be a pleural line visible along the midline, indicating pleural fluid accumulation.

     *Inconsistency: a pleural line along the midline usually indicates pneumothorax, not pleural effusion.*

   - No Evidence of Pulmonary Infiltrates: The lung fields appear relatively clear, with no signs of pulmonary infiltrates, consolidation, or ground-glass opacities.

     *Overconfident: infiltrates may be masked.*

   - ...

Example of drift-biased target model.

Main Observations

- While some models, such as Qwen-VL-Max, produce concise, high-precision reasoning, others, such as GPT-4o, favor high-recall, expansive elaboration. Because each stream is biased in its own direction, no single trajectory captures the "true" reasoning manifold; it more plausibly lies within the consensus of these divergent streams.

- A target model trained simply to mimic these drifting streams does not automatically synthesize their strengths; instead, it internalizes the union of their biases, which surfaces as hallucinations and semantic inconsistencies.
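The "union of biases" effect can be illustrated with a toy calculation. Assume each source is summarized as a categorical distribution over a few hypothetical outcomes: one finding that all sources share, plus one idiosyncratic error per source. A student fit by maximum likelihood to an equal-sized pool of samples from all sources converges to the average of the source distributions, so it keeps every outcome that any single source favors. For contrast, a normalized geometric mean (a product of experts) keeps mass only where all sources agree. The function names and numbers are hypothetical, and the product of experts is a textbook aggregation rule shown for comparison; it is not claimed to be the method proposed in this work.

```python
import math


def pooled_imitation(sources):
    """MLE categorical fit to equal-sized pooled samples: the mean of the sources.

    Its support is the union of the sources' supports.
    """
    k = len(sources)
    return [sum(col) / k for col in zip(*sources)]


def consensus(sources):
    """Normalized geometric mean (product of experts).

    An outcome keeps mass only if every source gives it nonzero probability,
    so the support is the intersection of the sources' supports.
    """
    n = len(sources)
    raw = [math.prod(col) ** (1.0 / n) for col in zip(*sources)]
    total = sum(raw)
    if total == 0:
        raise ValueError("sources share no outcome with nonzero probability")
    return [r / total for r in raw]


# Hypothetical outcomes and source distributions (illustrative only).
outcomes = ["shared finding", "bias of source 1", "bias of source 2"]
source_1 = [0.6, 0.4, 0.0]  # favors its own spurious claim
source_2 = [0.6, 0.0, 0.4]  # favors a different spurious claim

student = pooled_imitation([source_1, source_2])
agreed = consensus([source_1, source_2])

for name, p, q in zip(outcomes, student, agreed):
    print(f"{name}: pooled={p:.2f} consensus={q:.2f}")
# shared finding: pooled=0.60 consensus=1.00
# bias of source 1: pooled=0.20 consensus=0.00
# bias of source 2: pooled=0.20 consensus=0.00
```

The pooled student inherits both spurious claims, while the consensus distribution discards them. A hard product of experts is brittle in practice (one source assigning zero probability vetoes an outcome), so this is meant only to show why naive imitation accumulates biases.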

How can we autonomously turn this drift into a constraint, and thereby achieve robust reasoning alignment in non-stationary environments?